Enterprises are entering a new phase of artificial intelligence adoption, one where intelligence is no longer confined to text or numbers alone. Today’s business environments generate signals across documents, images, videos, audio, sensor streams, logs, and transactional systems. Extracting value from this diversity requires more than traditional AI models. It requires Multi-Modal AI.

Multi-Modal AI enables systems to understand, correlate, and reason across multiple data types simultaneously. When paired with strong data foundations and intelligent orchestration, it allows enterprises to move beyond siloed analytics toward richer context, faster decisions, and more adaptive automation.

At the center of this evolution are Data Engineering, Multi-Modal Models, and Agentic Intelligence, working together to transform how enterprises operate, decide, and scale.

Why Multi-Modal AI Matters Now

Enterprise data is no longer homogeneous. Organizations deal with:

- Unstructured text from emails, contracts, and support tickets

- Images and video from inspections, medical imaging, and surveillance

- Audio from call centers and voice assistants

- Structured data from ERP, CRM, IoT, and operational systems

Enterprise data growth is accelerating not just in volume, but in variety. According to IDC, unstructured data accounts for over 80% of all enterprise data, spanning documents, images, video, audio, logs, and sensor streams. Traditional single-modal AI systems are not designed to reason across this diversity.

Gartner predicts that by 2027, 40% of generative AI solutions will be multi-modal, up from 1% in 2023, driven by enterprise demand for richer context and higher decision accuracy.

This shift signals a fundamental change: intelligence must span multiple modalities simultaneously to remain relevant at enterprise scale.

The Role of Data Engineering in Multi-Modal AI

Multi-Modal AI is only as effective as the data pipelines that support it. Without robust Data Engineering, enterprises struggle with inconsistent formats, latency, governance gaps, and integration complexity.

Strong data foundations are now a prerequisite for AI success. McKinsey research found that data-driven organizations are 23× more likely to acquire customers and 19× more likely to be profitable than their peers.

Without scalable pipelines for unstructured and semi-structured data, multi-modal AI initiatives stall due to latency, quality gaps, and compliance risk. This makes Data Engineering the critical enabler of enterprise AI readiness.

From Multi-Modal AI to Agentic Intelligence

While Multi-Modal AI enhances understanding, Agentic AI enables action.

Agentic systems can reason across multi-modal inputs, plan next steps, invoke tools, and adapt their behavior based on outcomes. Instead of responding to isolated prompts, they operate continuously within enterprise workflows.

According to Gartner, by 2028 at least 15% of day-to-day work decisions will be made autonomously through agentic AI. Workflows in operations, quality engineering, customer experience, and IT service management are natural early candidates.

These systems depend heavily on multi-modal inputs (logs, dashboards, documents, alerts, and real-time signals) to reason holistically and act autonomously within defined governance boundaries.

Multi-Modal AI Use Cases Across Industries

Multi-Modal AI is already reshaping enterprise operations across sectors:

- Customer Experience: Combining chat transcripts, voice calls, and CRM data to deliver personalized, context-aware interactions

- Manufacturing & Operations: Merging video inspection data with sensor readings and maintenance logs for predictive quality assurance

- Healthcare: Integrating clinical notes, imaging data, and lab results to support diagnostics and care coordination

- Financial Services: Correlating documents, transactions, and behavioral signals to enhance fraud detection and risk assessment

- Quality Engineering: Using logs, screenshots, test artifacts, and telemetry to improve defect detection and root cause analysis

These use cases demonstrate how Multi-Modal AI enables richer insights while reducing manual interpretation and delays.

Generative AI and Multi-Modal Systems

Generative AI has accelerated the adoption of Multi-Modal AI by enabling systems that can generate and reason across text, images, audio, and code.

Market adoption is accelerating rapidly. Bloomberg Intelligence projects the generative AI market to exceed $1.3 trillion by 2032, with multi-modal capabilities cited as a primary driver of enterprise adoption beyond text-based use cases.

Meanwhile, Forrester reports that enterprises using multi-modal AI experience up to 35% improvement in decision accuracy when compared to single-input AI systems, particularly in complex operational environments.

Enterprise Challenges in Adopting Multi-Modal AI

Despite its potential, Multi-Modal AI adoption introduces new challenges:

- Data complexity across formats and sources

- Latency and cost of processing rich media at scale

- Governance and compliance for sensitive multi-modal data

- Model explainability and trust in automated decisions

In practice, execution remains challenging. A Deloitte survey shows that only 22% of enterprises feel confident in their ability to govern AI systems that consume unstructured and multi-modal data, highlighting gaps in observability, explainability, and compliance readiness.

This reinforces why multi-modal AI adoption must be paired with strong governance, responsible AI frameworks, and enterprise-grade architecture.

The Narwal.ai Approach to Multi-Modal Enterprise AI

At Narwal.ai, we help enterprises operationalize Multi-Modal AI by combining strong data engineering foundations with intelligent, agent-driven systems.

Our approach focuses on:

- Designing scalable data architectures for multi-modal workloads

- Enabling secure, governed AI pipelines across enterprise ecosystems

- Building agentic systems that reason, act, and adapt responsibly

- Driving measurable outcomes across operations, quality, and decision-making

By aligning AI strategy with execution, we help organizations move from experimentation to enterprise-wide impact.

Explore Multi-Modal AI with Narwal.ai

Ready to unlock the power of Multi-Modal AI and intelligent enterprise systems?

Narwal.ai helps organizations design, deploy, and scale AI solutions that combine data, models, and autonomy securely and responsibly.

References

IDC – Data Age 2025: The Digitization of the World

Gartner – Top Strategic Technology Trends: Multimodal AI

McKinsey & Company – The Data-Driven Enterprise of 2025

Bloomberg Intelligence – Generative AI Market Outlook

Forrester Research – The State of Enterprise AI

Deloitte – State of AI in the Enterprise

Related Posts

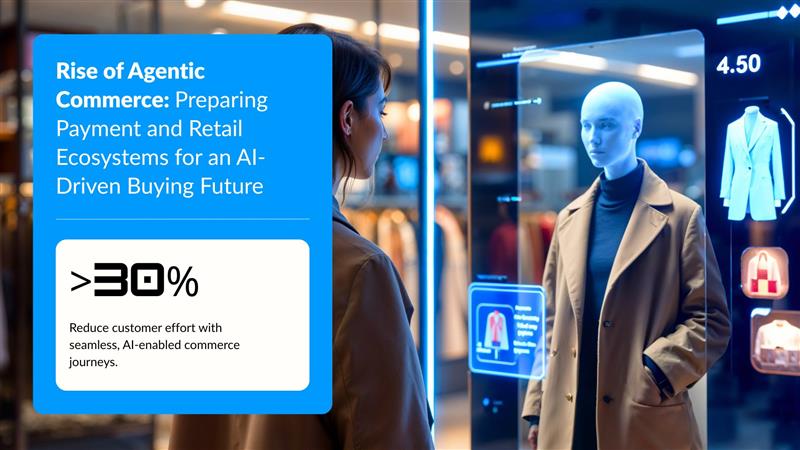

Agentic Commerce: Preparing Payment and Retail Ecosystems for an AI-Driven Buying Future

Digital commerce is entering a transformative phase where transactions are no longer driven solely by human interactions. Intelligent AI agents are beginning to act on behalf of consumers anticipating needs, evaluating options across platforms, negotiating value, and completing purchases with…

- Apr 02

Generative AI : Enabling Intelligent Automation and Enterprise Innovation

Generative AI is rapidly transforming how enterprises innovate, automate, and deliver value. From intelligent virtual assistants to autonomous workflow orchestration, Generative AI is enabling organizations to move beyond traditional analytics and automation toward context-aware, adaptive, and scalable decision-making systems. As…

- Mar 27

Narwal | © 2024 All rights reserved